Runway Gen 3: The Ultimate Guide to AI Video Creation

Master Runway Gen 3 with our ultimate guide. Learn prompt engineering and keyframe control, and create stunning AI videos. Unlock your creativity today.

Explore the latest trends and practical strategies of microservices architecture in 2026, helping you build scalable, resilient, and efficient software systems.

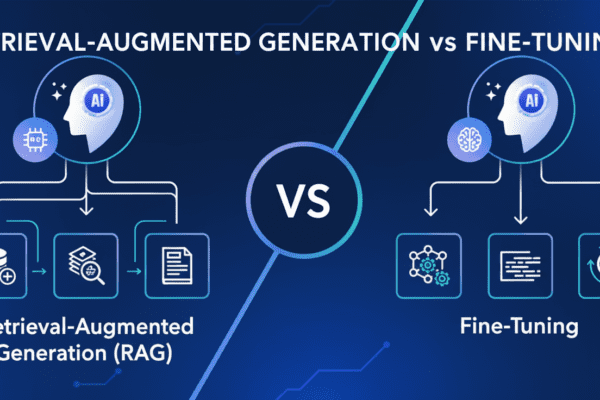

Navigating retrieval-augmented generation vs fine-tuning? Discover which AI strategy fits your project’s needs for accuracy, cost, and flexibility.

Discover how AI in DevOps (AIOps) predicts outages and automates incident response – actionable insights, real examples and best practices for IT teams.

Foldable phones have evolved fast, but are they truly ready for everyday users? This deep dive examines durability, software, battery life, and real-world value.

A sharp roundup of the most damaging technology failures, failed tech products, and IT industry mistakes, with the lessons every business should take seriously.

AWS (Amazon Web Services) has transformed cloud computing with scalable infrastructure, high performance, and cost efficiency that enable businesses to innovate and thrive. With global reach, robust security measures, and a pay-as-you-go model, AWS empowers organizations to accelerate digital transformation and remain competitive. Its success stories showcase its versatility and impact across diverse industries.

Discover how Voice Commerce transforms digital marketing through voice-activated shopping, voice SEO, and conversational commerce strategies.

Learn how to control spending across AWS, Azure, and Google Cloud with practical multi-cloud cost management strategies, the best cloud cost tools, and proven ways to optimize cloud spend.

Discover how edge computing revolutionizes IoT by enabling real-time data processing for autonomous vehicles, smart factories, and healthcare.

Looking for Chrome alternatives? Compare the best modern browsers for speed, privacy, and AI features, including Brave, Firefox, Arc, Opera, and more.

Discover how agentic AI evolves into autonomous digital workers to boost productivity, transform IT operations, and reshape the workforce.

Amazon EC2 has revolutionized cloud computing by providing enterprises with a scalable and cost-effective solution. Its key benefits are flexibility, cost effectiveness, security, and integration.